AI Tokenization

8 episodes: 90-second audio overviews of AI tokenization.

Token economics — why every token has a price

API providers charge per input and output token; understanding tokenization directly impacts cost estimation, prompt design, and budget optimization.
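As a rough illustration of the arithmetic, the sketch below estimates the cost of a single request from its token counts. The function name and the per-million-token prices are hypothetical placeholders, not figures from any provider's price list.

```python
def estimate_cost(input_tokens: int, output_tokens: int,
                  input_price_per_million: float,
                  output_price_per_million: float) -> float:
    """Return the estimated cost in dollars for one request."""
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
cost = estimate_cost(2_000, 500, 3.0, 15.0)
print(f"${cost:.4f}")  # prints $0.0135
```

Because output tokens are often priced higher than input tokens, trimming the response length can matter as much as trimming the prompt.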

Positional encoding — teaching word order to parallel models

Since transformers process all tokens simultaneously, position must be explicitly injected via sinusoidal functions or learned embeddings.
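The sinusoidal variant can be sketched in a few lines. This is a minimal pure-Python version of the commonly cited formulation (sine on even dimensions, cosine on odd dimensions, base 10000); the function name and the tiny `d_model` are chosen only for illustration.

```python
import math


def sinusoidal_encoding(position: int, d_model: int) -> list[float]:
    """Build the position vector for one token position."""
    vector = []
    for i in range(d_model):
        # Dimensions 2k and 2k+1 share the same frequency.
        exponent = (2 * (i // 2)) / d_model
        angle = position / (10000 ** exponent)
        # Even dimensions use sine, odd dimensions use cosine.
        vector.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return vector


pe0 = sinusoidal_encoding(0, 8)
pe1 = sinusoidal_encoding(1, 8)
print(pe0)
print(pe1)
```

Each position gets a distinct vector, which is added to the token's embedding so that otherwise identical tokens can be told apart by where they occur.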

Word embeddings — turning tokens into vectors

Each token maps to a learned high-dimensional vector, and proximity between vectors in that space encodes similarity in meaning.
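A toy lookup table makes the idea concrete. The vocabulary and the three-dimensional vectors below are invented for illustration; real embeddings are learned during training and have hundreds or thousands of dimensions.

```python
import math

# Hypothetical vocabulary and hand-picked vectors (not learned values).
vocab = {"cat": 0, "dog": 1, "car": 2}
embeddings = [
    [0.9, 0.8, 0.1],  # cat
    [0.8, 0.9, 0.2],  # dog
    [0.1, 0.2, 0.9],  # car
]


def embed(token: str) -> list[float]:
    """Look up the vector for a token via its ID."""
    return embeddings[vocab[token]]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


print(cosine_similarity(embed("cat"), embed("dog")))
print(cosine_similarity(embed("cat"), embed("car")))
```

With these made-up vectors, "cat" scores closer to "dog" than to "car", which is the kind of geometry a trained embedding table is expected to exhibit.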

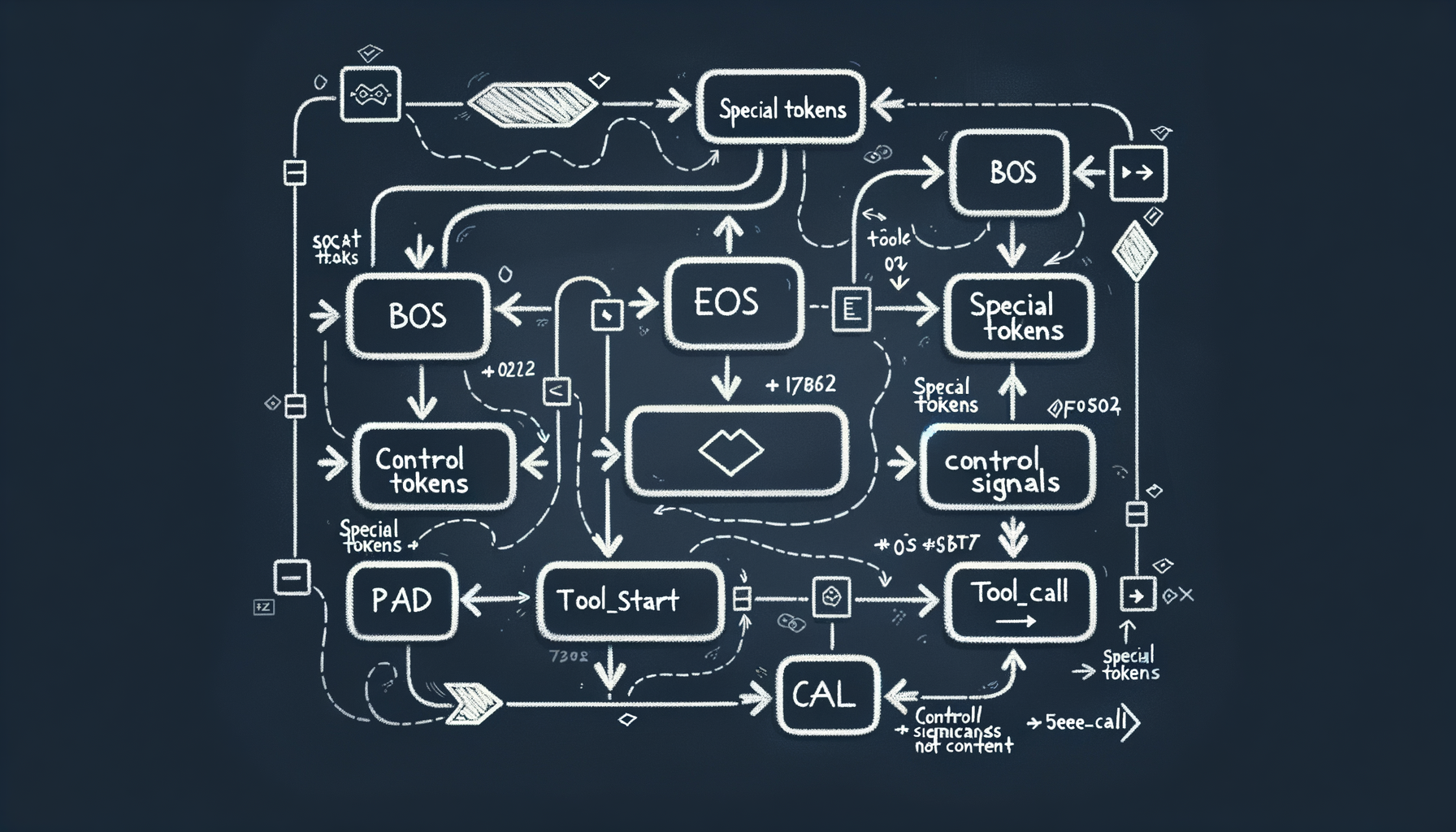

Special tokens — control signals for models

\[BOS\], \[EOS\], \[PAD\], \<\|im\_start\|\>, \<tool\_call\> — reserved tokens that mark boundaries, roles, and structure for the model.
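A small sketch of how boundary and padding tokens are typically applied to a batch of token-ID sequences. The numeric IDs for BOS, EOS, and PAD are assumptions for this example; each real tokenizer assigns its own.

```python
# Hypothetical IDs for the reserved tokens; real tokenizers assign their own.
BOS, EOS, PAD = 1, 2, 0


def frame_and_pad(sequences: list[list[int]]) -> list[list[int]]:
    """Wrap each sequence in BOS/EOS, then pad all to the same length."""
    framed = [[BOS] + seq + [EOS] for seq in sequences]
    max_len = max(len(seq) for seq in framed)
    return [seq + [PAD] * (max_len - len(seq)) for seq in framed]


batch = frame_and_pad([[17, 42, 99], [7]])
print(batch)  # prints [[1, 17, 42, 99, 2], [1, 7, 2, 0, 0]]
```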

Vocabulary size tradeoffs — why 32K, 50K, or 100K tokens

Larger vocabularies encode the same text in fewer tokens (cheaper inference) but require larger embedding and output layers, and therefore more parameters to train.
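The parameter side of the tradeoff is simple multiplication: the embedding table holds one vector per vocabulary entry. The sketch below assumes a hypothetical model width of 4096 and treats 32K, 50K, and 100K as round numbers.

```python
def embedding_parameters(vocab_size: int, d_model: int) -> int:
    """Number of weights in an embedding table of shape (vocab_size, d_model)."""
    return vocab_size * d_model


D_MODEL = 4096  # assumed model width, for illustration only

for vocab_size in (32_000, 50_000, 100_000):
    params = embedding_parameters(vocab_size, D_MODEL)
    print(f"{vocab_size:>7,} tokens -> {params:,} embedding parameters")
```

At this assumed width, moving from 32K to 100K entries adds roughly 280 million embedding weights, and an untied output layer of the same shape would double that.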

SentencePiece & tiktoken — tokenizer implementations

SentencePiece (Google) and tiktoken (OpenAI) are two widely used libraries for fast tokenization, found across many model families.

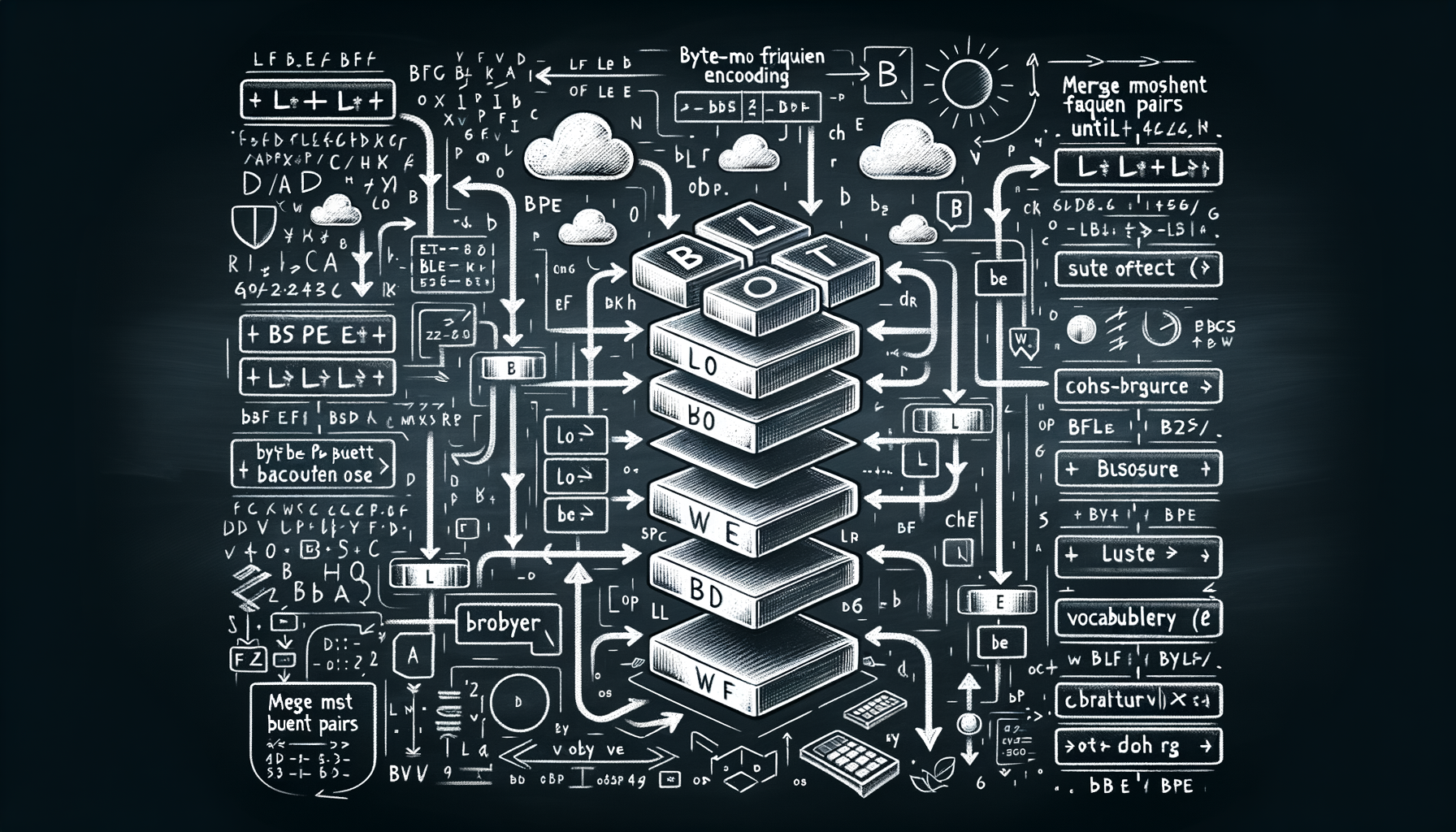

Byte-Pair Encoding (BPE) — how tokenizers learn to split text

Starting from individual bytes or characters, BPE iteratively merges the most frequent adjacent pairs until reaching a target vocabulary size.
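The merge loop can be sketched on a toy corpus. This is a minimal character-level version for illustration, not a production tokenizer: the word-frequency corpus is made up, words are pre-split, and ties between equally frequent pairs are broken by first occurrence.

```python
from collections import Counter


def most_frequent_pair(words: dict[tuple[str, ...], int]):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for left, right in zip(symbols, symbols[1:]):
            pairs[(left, right)] += freq
    return max(pairs, key=pairs.get) if pairs else None


def merge_pair(words: dict[tuple[str, ...], int], pair: tuple[str, str]):
    """Replace every occurrence of the pair with one merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def train_bpe(corpus: dict[str, int], num_merges: int) -> list[tuple[str, str]]:
    """Learn up to num_merges merge rules, starting from single characters."""
    words = {tuple(word): freq for word, freq in corpus.items()}
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        merges.append(pair)
        words = merge_pair(words, pair)
    return merges


# Made-up word frequencies for illustration.
corpus = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
merges = train_bpe(corpus, 3)
print(merges)
```

On this corpus the first two merges build "es" and then "est", because that suffix is shared by the two most frequent long words. Each merge adds one entry to the vocabulary, so the number of merges sets the final vocabulary size.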

What are tokens — the atoms of language models

Models don't see words or characters; they see tokens — subword units that balance vocabulary size with text coverage.
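To show what a subword split looks like, the sketch below segments words by greedy longest-match lookup against a tiny vocabulary. Both the vocabulary and the matching strategy are simplifications chosen for this example; this is not how BPE applies its merges, and real tokenizers learn their vocabularies from data.

```python
def greedy_tokenize(text: str, vocab: set[str]) -> list[str]:
    """Split text into the longest vocabulary pieces, left to right."""
    tokens, start = [], 0
    while start < len(text):
        # Try the longest candidate first; a single character is the fallback.
        for end in range(len(text), start, -1):
            piece = text[start:end]
            if piece in vocab or end == start + 1:
                tokens.append(piece)
                start = end
                break
    return tokens


# Hypothetical subword vocabulary.
vocab = {"token", "ization", "un", "believ", "able"}
print(greedy_tokenize("tokenization", vocab))  # prints ['token', 'ization']
print(greedy_tokenize("unbelievable", vocab))  # prints ['un', 'believ', 'able']
```

A handful of reusable pieces covers words the vocabulary never stored whole, and the single-character fallback keeps any input representable.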