GenBodha Bytes

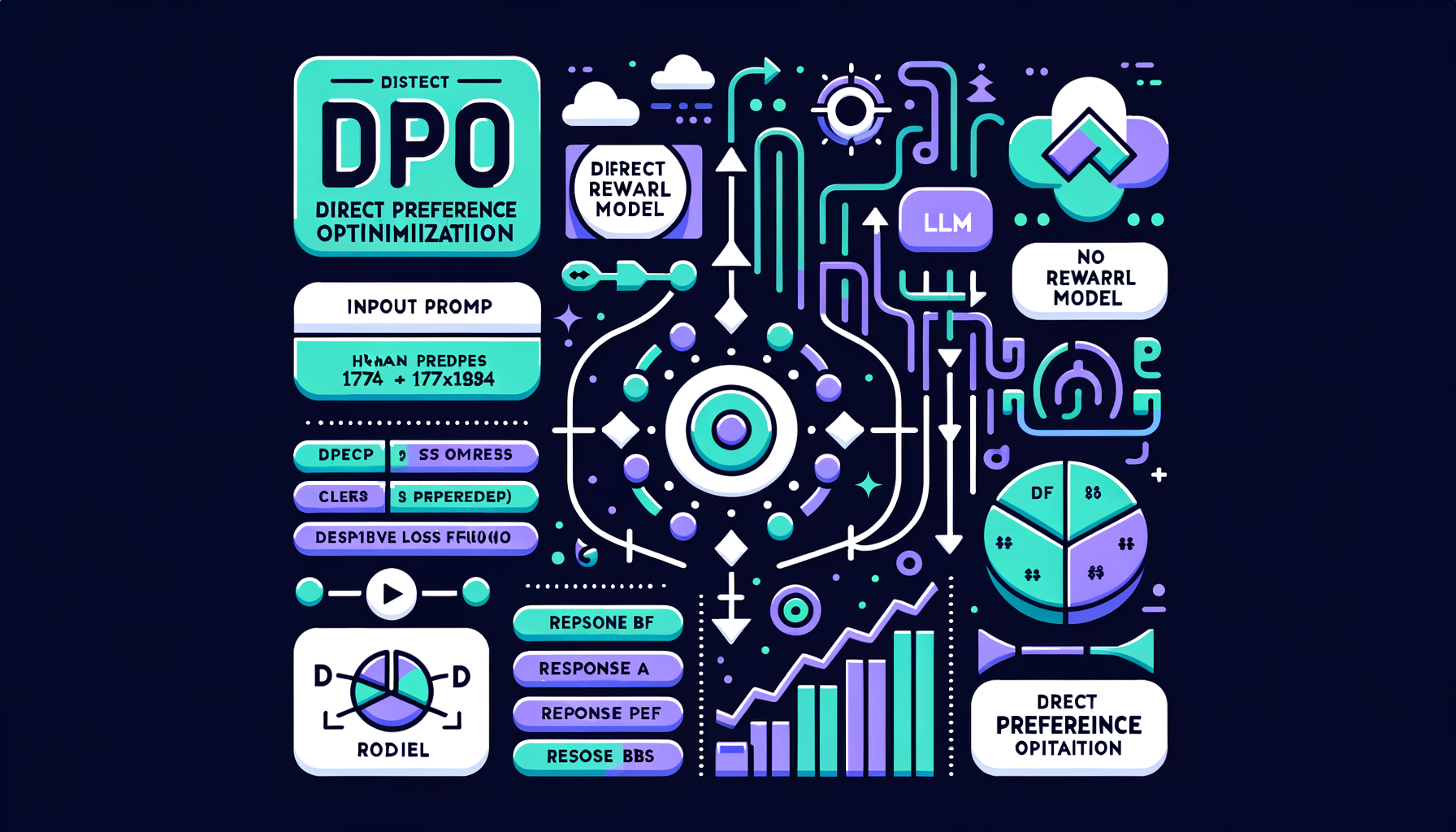

DPO — Direct Preference Optimization

Tap play · 90-second GenAI byte

Want to go deeper? Explore full courses with hands-on labs, quizzes, and chapter podcasts.
